#AISummitTalks featuring Stuart Russell, Max Tegmark, Jaan Tallinn, and many others

Check out the full recording of the second edition of #AISummitTalks below!

Last week, the much-anticipated AI Safety Summit took place at Bletchley Park, Milton Keynes, UK. For the first time at the highest level, world leaders discussed how to prevent human extinction by AI. The rest of society was, however, largely excluded from this event.

#AISummitTalks bridged this gap. The Existential Risk Observatory foundation and AI Safety startup Conjecture facilitated two public discussions (edition 1 and edition 2) between researchers, policy makers, and representatives from industry, government, and civil society.

At the second edition, on the 31st of October, right outside historic Bletchley Park on the eve of the Summit, the calls by these leading voices to the Summit attendees were clear: tread with caution, and make this Summit the first intergovernmental step towards preventing global catastrophe.

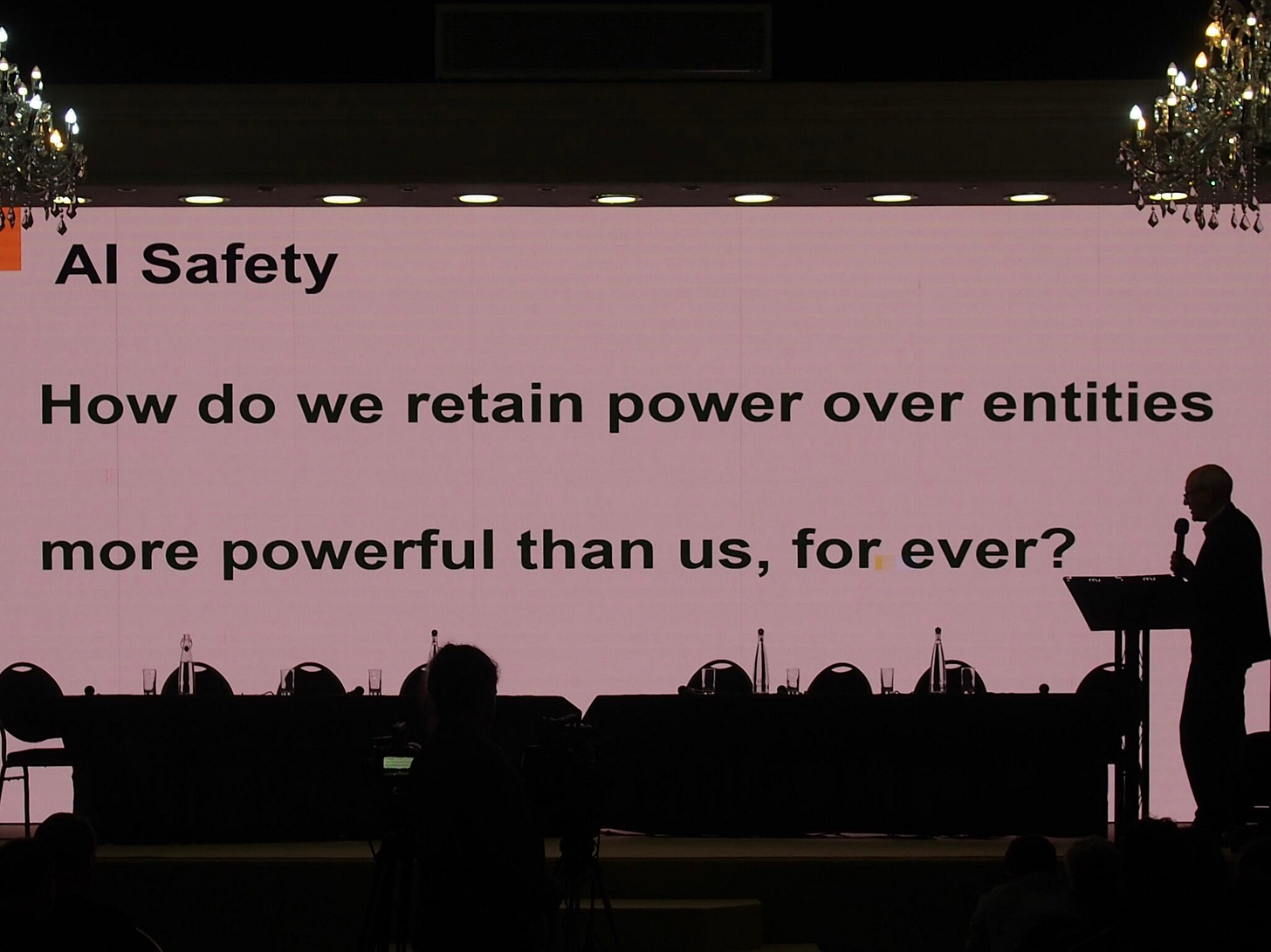

The discussions raised and addressed many questions and expressed hopes, but also fears, for the future. Connor Leahy (CEO, Conjecture), highlighting the potential risks, stated: “The more advanced AI becomes, the more difficult it becomes to understand and control.” Computer scientist Prof. Stuart Russell (UC Berkeley) said: “Forget about making AI safe; concentrate instead upon making safe AI.”

Panelist Prof. Max Tegmark (MIT) agreed: “Recklessly racing to more and more powerful AI systems, with no meaningful safety standards or oversight, comes with unnecessary and unacceptable risk. We should not let a handful of corporations gamble with our shared future.”

This highlighted the need for resolute outcomes from this AI Safety Summit. As the first AI Safety Summit unfolds, a declaration should pave the way for a secure future.

#AISummitTalks: The Aftermath

Dutch journalist Stijn Bronzwaer (NRC) wrote two articles about AI Safety and the Summit. The first one can be found here, the second one here (paywall, in Dutch).

Both showcase urgent calls for governmental intervention in AI development. In "AI-expert: ‘Als de overheid niet snel ingrijpt, gaan we in de komende twaalf maanden de consequenties voelen’", Stuart Russell even said that without such intervention, “we will be feeling the consequences in the next twelve months.”

A few memorable passages:

At Wilton Hall, Russell pointed out the potential of AI, provided governments make the right decisions now. “Think of a world where healthcare is completely optimized for every individual. Digital educators who are better, and more patient, than humans,” he said. “Or scientific breakthroughs that we cannot yet comprehend.”

But there are also risks, Russell said. Disinformation. Inequality. Cyber attacks. Military weapon systems, where computers make life and death decisions. And, Russell wondered: “What will people do when computers can soon do everything we are currently paid to do?”

“If we don't intervene now, I see two possibilities. Either a medium-sized disaster will occur, our financial system will be shut down, for example, and then we will suddenly become serious about regulation. Like what Chernobyl did for how we deal with nuclear energy. Or things go completely wrong, we lose control over our systems and there is nothing left to regulate. In films, humans are always ultimately smarter than the smartest machines. That won't work. They are smarter than us.”

How do we prevent AI from pursuing its own goals? “That is the million, billion, quintillion dollar question. How do we ensure that AI remains safe and works to our advantage? It was simple with the chess computer: we gave it a simple goal. Namely: checkmate your opponent. The problem now is that with current AI systems we do not know exactly what purpose they actually serve. Companies experimenting with self-driving cars are discovering that even with something as specific as driving a car, it is very difficult to program the purpose of the system correctly. Because that is more than just simply driving to a destination.”

“Do you know what p(doom) means?” No? “That stands for the probability of doom [the chance of the end of time]. People often ask me: what is your p(doom)? I do not like it. It is wrong to dismiss someone as a doomer. AI is like a huge boulder in the road. And the doomers are the people who say: be careful, steer to the left! This is how we prevent an accident.”

Ending on a positive note, Russell is happy that the world seems to be more receptive to warnings about AI existential risk: “At the beginning of this year, the British government said: we do not need to regulate. Let's go all out for innovation. That has completely turned around.”

For more content from the audience at #AISummitTalks, check out, for example, this post, this post, this post, and this post.

Other news

Talks - On the 9th (at KINO Rotterdam; that’s today!) and the 11th of November (at Slachtstraat Theater, Utrecht), ERO will talk at the screening of the film iHuman (2019). On Saturday, Joep Meindertsma of PauseAI will join our conversation about AI x-risk.

News - For NRC readers, Stijn Bronzwaer recently started a newsletter dedicated only to artificial intelligence.

Policy - A motion was tabled in the Dutch Parliament on whether “a Dutch advisory body could be of added value in developments surrounding artificial intelligence that have an impact on society.”

News - Netherlands building own version of ChatGPT amid quest for safer AI

Talks - In late October, Existential Risk Observatory’s Ruben Dieleman delivered a talk at THEA on existential risks and humanity’s long-term potential.

Donations - Have you considered donating to the Existential Risk Observatory? Existential risk awareness building is funding-constrained. With additional funding, we could operate in more countries, organize more and better events, and do more research investigating the effects of our interventions. We are sincerely happy with all support, both large and small! You can either directly contact us or donate through this link.