Season's Greetings from Existential Risk Observatory

A look back at 2023: events, actions, publications - and an invitation!

What an eventful year 2023 has been, both for the foundation and for AI safety at large! To name a few promising developments: we witnessed the creation of the Bletchley Declaration, the Executive Order by US President Biden, the EU AI Act nearing adoption, UN Secretary-General Guterres, the European Commission, and Von der Leyen (in her State of the Union address) all acknowledging AI x-risk, and several interpretability breakthroughs.

Our last event of 2023

Existential Risk Observatory wants to review the year with you, and look ahead at what lies beyond the AI Safety Summit and the AI Act, in Dudok, Den Haag, on the 13th of December! You are cordially invited to our upcoming event: Please sign up here!

What else did we manage to get done in 2023? Let’s take a look at the list below!

Our Message

Existential Risk Observatory appeared in various media:

Otto Barten and Roman Yampolskiy published a piece in TIME Magazine on why uncontrollable AI looks more likely than ever.

Later in the year, ERO appeared in TIME Magazine again, with an article on the proposed AI Pause as the most radical and most efficient way to combat existential risks from artificial intelligence.

Beyond this, we published several other articles in news media, such as this debate in Telegraaf’s De Kwestie (Dutch), this article on the blurring border between what is real and what is AI in NRC (Dutch), this letter about why the AI Pause would be a good idea (Dutch), and this interesting opinion piece on AI in Dagblad van het Noorden (Dutch).

We also appeared on a range of podcasts, such as De Rudi & Freddie Show, AI Verkenners, and TypeOnePlanet, as well as Dutch radio programme Dit Is De Dag, and the Dutch television programme Dit Is De Kwestie, in order to explain why and how AI could pose an existential risk.

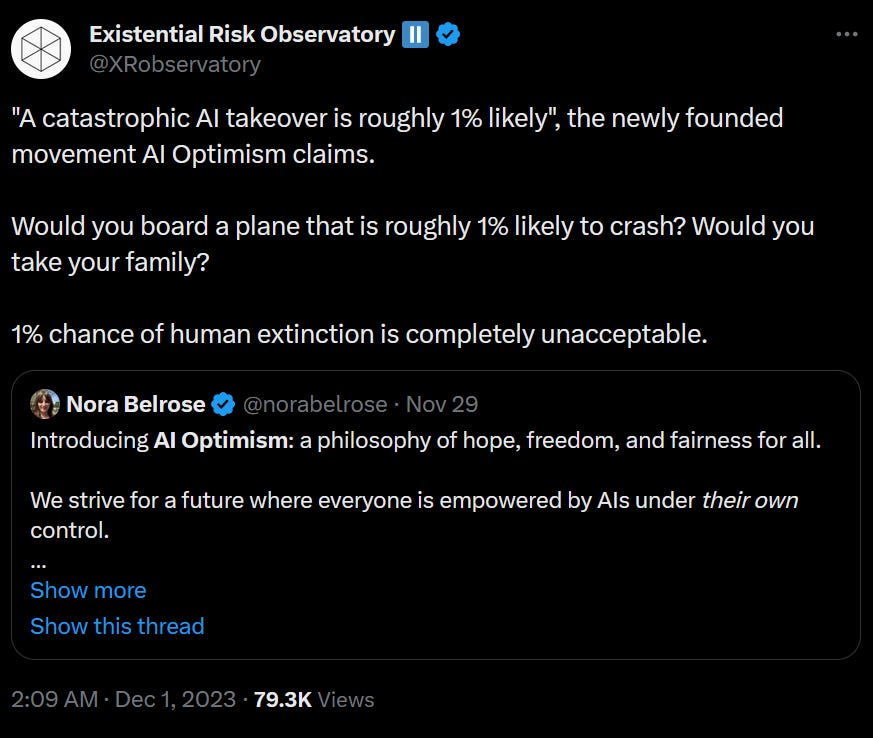

And we went viral with this hot take:

Our Policy Proposals and Other Work

Existential Risk Observatory published policy proposals, advice, and calls to action:

We published 15 policy measures to be implemented by as many countries as possible, spanning across various policy domains.

To all political parties willing to take AI x-risk seriously, we submitted the position paper “Veilige & Menselijke AI” (“Safe & Humane AI”, in Dutch).

On the eve of the Dutch general elections, we published a stemwijzer (voting guide). PvdD (Party for the Animals) definitely has the best policy proposals with regard to AI.

Our former intern Alexia published great research on the perception of AI x-risk. Learn more about how recent media items have changed people’s minds about AI x-risk, or what the American public thinks of the proposed AI moratorium.

AI Onder Controle, the petition calling on the Dutch government to act against the negative consequences of the application of (advanced) artificial intelligence, was received by the then-incumbent Commissie Digitale Zaken (Digital Affairs Committee).

Our Outreach

Existential Risk Observatory organised and participated in several events:

We crossed the Channel and organised #AISummitTalks I in London and #AISummitTalks II in Bletchley, just before the AI Safety Summit itself kicked off! These events led to great coverage in news media, such as in Dutch newspaper NRC.

We held two (nearly sold-out) special events about new AI developments and x-risk at Pakhuis de Zwijger in Amsterdam, each followed by a lively discussion. Find the recording of the first one here and the recording of the second one here.

We also organised workshops, talks, and screenings, for instance at EAGxBerlin, at THEA The Hague, at KINO Rotterdam, and at Slachtstraat Theater Utrecht. All of these had great turnouts and energising discussions on existential risks, and the last one was organised in collaboration with Joep Meindertsma of PauseAI.

Speaking of PauseAI: we participated in the first protests by the PauseAI movement. PoliticoEU dedicated a detailed article to the one in Brussels. This Twitter thread explains why protesting could be useful, and why it is timely.

And lastly…

Existential risk awareness-building is funding-constrained. With additional funding, we could operate in more countries, organise more and better events, and do more research into the effects of our interventions.

You can either directly contact us or donate through this link. We are sincerely thankful for your support.

Existential Risk Observatory wishes you a happy festive season! We are grateful to all of you who have engaged with us at our events, volunteered with us, or supported us with your time, and to everyone else who made it possible for Existential Risk Observatory to do its work. Here’s to an exciting, safe 2024!